Cats Vs Dogs - The Google Image Relevancy Experiment

Does the relevancy of images on a landing page affect organic search performance? This is the question we sought to answer in our latest SEO experiment.

After the results from our previous duplicate image experiment showed that unique images on landing pages correlated with higher rankings in the search results, we next wanted to test what influence (if any) image relevancy had on the organic search results.

A Quick Note on Correlation Studies

Correlation studies are not perfect, but one of the only ways to test a single variable in a complex ecosystem like Google's search engine is to isolate that variable (as best we can) as the only factor that could influence the rankings within an experiment.

In the past, we have used completely new, made-up keywords which returned no search results prior to our experiment to help isolate single variables. However, this method risks limiting any effects from language processing algorithms (amongst others) that could be influencing search result rankings for real keywords in actual SERPs. So, for this experiment, we looked at a real keyword.

It is important to note that doing this did introduce some risk of influences on the rankings outside of our control (e.g. real searchers finding and clicking on some experiment sites but not others in the search results). As our experiment sites weren’t ranking on the first few pages for the target keyword, we suspect the effects of such interactions were minimal or non-existent.

Hypothesis

SEOs are well aware that their content needs to be relevant to the keywords they are targeting in order for it to rank as strongly as possible. From the search engines' side, the results they rank top for any given keyword need to be as relevant as possible; otherwise, searchers will seek out alternatives where they can find the information they are looking for more quickly, efficiently and intuitively.

So, if the relevancy of your content is important in ranking your pages for their target keywords, what makes up relevancy? With images making up such an integral part of the experience of using a website, we hypothesised that the contents of the images found on a page could play a key part in the perceived relevance of that page as a whole. And if this was something being looked at by a search engine, we wanted to find out if it played any part in the search rankings.

The results of our previous experiment already suggested that Google was looking at image duplication and, at least in part, using that information when deciding where to rank the experiment sites (in organic and image search). Next, we wanted to find out if they were using the actual image content in a similar way.

Methodology

From the outset, we concluded that there were a few key things to consider in setting up this experiment:

- Finding and targeting a keyword which our experiment sites could realistically rank for without any historical or external signals (e.g. links) and relatively little on-site content.

- Ensuring that Google could identify the images on half of the experiment sites as being more relevant to the target keyword than the images on the other half of the sites if they chose to do so.

- Minimising outside ranking factors that could influence the search results/rankings of our experiment sites and make our results less reliable.

Finding The Target Keyword

Given the nature of the experiment, we decided to focus on the concept of cats and dogs, hoping that the clear differences between the two types of animals would allow us to set up half our experiment sites with obviously more relevant images than the other half.

From the results of our previous experiment, we also knew that we would need to use unique images on every website, which led us to narrow things further and select the Dogue de Bordeaux breed specifically. With two Dogues de Bordeaux in the office (at the time), we would have no shortage of unique images to select from. Many of the Reboot team also had cats at home that could be photographed.

With the concept selected we then needed to find a keyword to target which our experiment websites would stand a chance of ranking anywhere in the index for. The keyword would have to be low enough in competition that we could be placed in the index despite having no historical or external signals and relatively little on-site content.

After some research, we decided to target the keyword Dogue de Bordeaux characteristics.

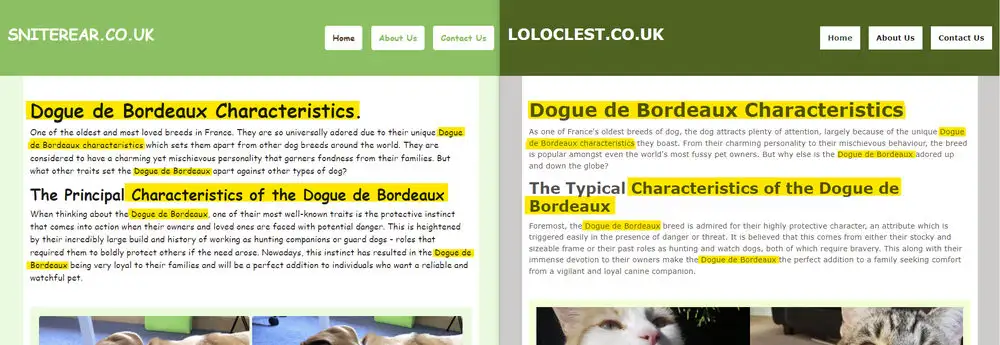

Establishing Image Relevancy on Half of The Experiment Sites

We knew that we wanted to test if the relevancy of images on a landing page would influence the rankings of that page for a predefined target keyword. Next, we needed to ensure that the images found on our experiment sites could be identified by Google as either more or less relevant to the target keyword if they decided to do so.

To do this we turned to Google’s Vision API which uses machine learning to understand and analyse images fed through it.

Whilst John Mueller has answered questions about Google Vision more recently, and even went as far as saying directly that it wasn’t currently being used in search, the tool still offered a good insight into what Google could understand about the images we intended to use on our test websites.

Our web development team used the Google Vision API to analyse over 300 unique images of our office dogs, Dexter and Lola, and a further 204 unique images of various cats belonging to Reboot staff.

We needed any dog image we used on the experiment sites to be clearly identified by Google Vision as not only an image containing a dog, but a specific dog breed (Dogue de Bordeaux). Any cat image used had to be identified as containing a cat and no dogs.

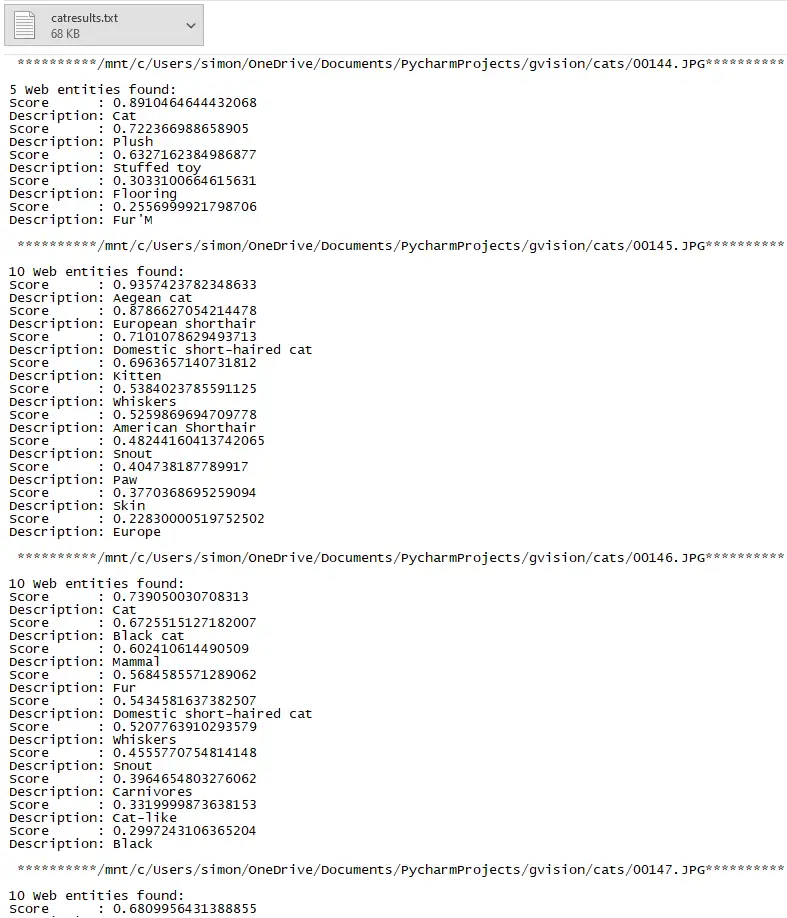

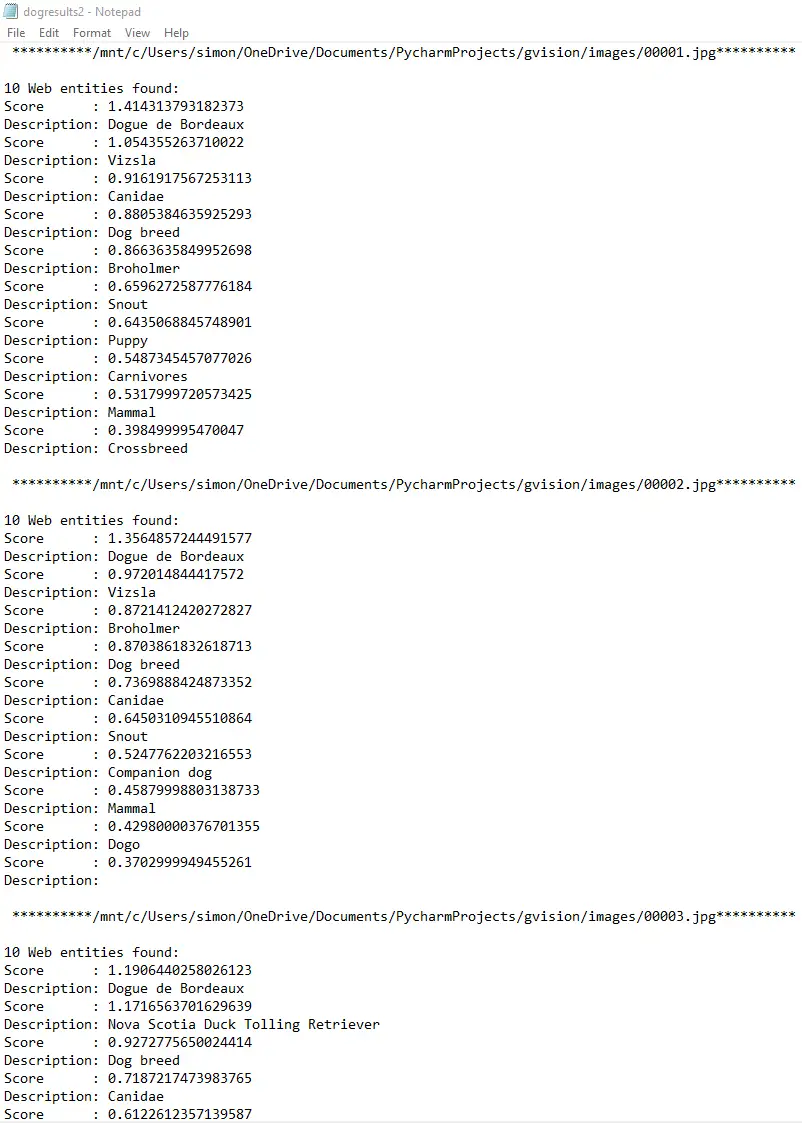

Using a custom Python script, we ran 504 unique cat and dog images through the Google Vision API and saved the information it returned to .txt files.
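The script itself isn't reproduced in this write-up. As a rough sketch of what this step could look like, the example below assumes the `google-cloud-vision` Python client and its label detection feature. The function names, the `.jpg` filter and the tab-separated output format are illustrative assumptions rather than the code we actually ran.

```python
from pathlib import Path


def format_labels(labels):
    """Render (description, score) pairs as one tab-separated line per label."""
    return "\n".join(f"{description}\t{score:.3f}" for description, score in labels)


def detect_labels(image_path, client):
    """Return (description, score) pairs from Vision API label detection."""
    # Imported lazily so the formatting helper works without the client library.
    from google.cloud import vision

    image = vision.Image(content=Path(image_path).read_bytes())
    response = client.label_detection(image=image)
    if response.error.message:
        raise RuntimeError(response.error.message)
    return [(label.description, label.score) for label in response.label_annotations]


def export_labels(image_dir, output_dir, client):
    """Write one .txt file of labels for every .jpg image in image_dir."""
    output_dir = Path(output_dir)
    output_dir.mkdir(parents=True, exist_ok=True)
    for image_path in sorted(Path(image_dir).glob("*.jpg")):
        labels = detect_labels(image_path, client)
        (output_dir / f"{image_path.stem}.txt").write_text(format_labels(labels))
```

With API credentials configured, a call such as `export_labels("images/dogs", "labels/dogs", vision.ImageAnnotatorClient())` would write one text file per image. Both folder names here are hypothetical.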

Results of running some of our cat images through the Google Vision API.

With these files, we could see the information that Google was pulling from each image and shortlist only those where they had identified the image as being conclusively about either the Dogue de Bordeaux breed or cats.

Some results from the Google Vision API after feeding it some of our Dogue de Bordeaux images.

As the screenshots above show, Google’s Vision API managed to pull an exceptional amount of information from each image, and even gave weights to the various things they found in each one.

Using this information and the weighting system already assigned to it, we shortlisted the images which Google was most confident were either of a Dogue de Bordeaux or a cat.

Doing this meant we could be more confident that they would be able to identify the dog images as more relevant for our target keyword than the cat ones if they wanted to.
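The shortlisting logic described above can be sketched in a few lines. This is only an illustration: the label names, the rule of excluding any cat image that also carries a "Dog" label, and the scores in `example` are all made-up placeholders, not values from our data.

```python
def top_score(labels, name):
    """Highest score for a label name (case-insensitive), or 0.0 if absent."""
    return max(
        (score for description, score in labels if description.lower() == name.lower()),
        default=0.0,
    )


def shortlist(labels_by_image, label, limit, exclude=()):
    """Rank images by their score for `label`, skipping any with an excluded label."""
    candidates = [
        (top_score(labels, label), name)
        for name, labels in labels_by_image.items()
        if top_score(labels, label) > 0.0
        and all(top_score(labels, other) == 0.0 for other in exclude)
    ]
    # Most confident first; ties are broken by file name for a stable order.
    candidates.sort(key=lambda item: (-item[0], item[1]))
    return [name for _, name in candidates[:limit]]


# Placeholder label data, invented purely to show the shape of the input.
example = {
    "img_001.jpg": [("Dog", 0.97), ("Dogue de Bordeaux", 0.93)],
    "img_002.jpg": [("Dog", 0.95), ("Mastiff", 0.80)],
    "img_003.jpg": [("Cat", 0.98), ("Whiskers", 0.91)],
    "img_004.jpg": [("Cat", 0.90), ("Dog", 0.55)],
}

dogs = shortlist(example, "Dogue de Bordeaux", limit=10)
cats = shortlist(example, "Cat", limit=10, exclude=("Dog",))
```

In this toy input only `img_001.jpg` carries the breed label, and `img_004.jpg` is dropped from the cat shortlist because a dog was also detected in it.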

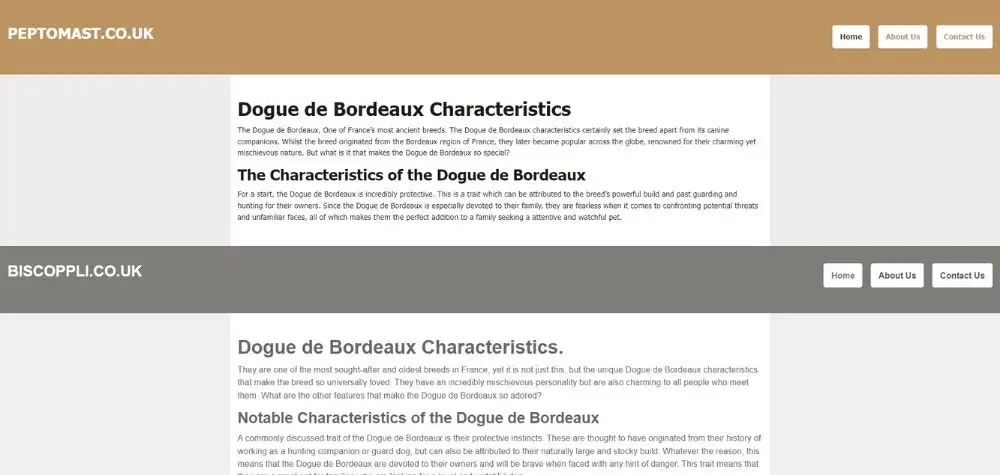

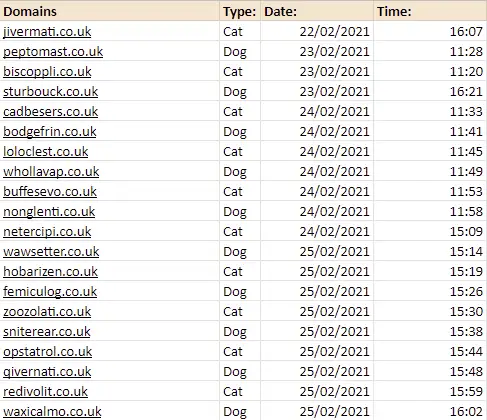

We would publish 20 websites in total, all with very similar content and equal speed/performance. 10 of the websites would contain only images of the Dogues de Bordeaux, and the other 10 would contain only images of cats.

The metadata was stripped from every image, file names were kept generic and no alt text was assigned, so nothing besides the image content could identify whether it was a cat or a dog image.

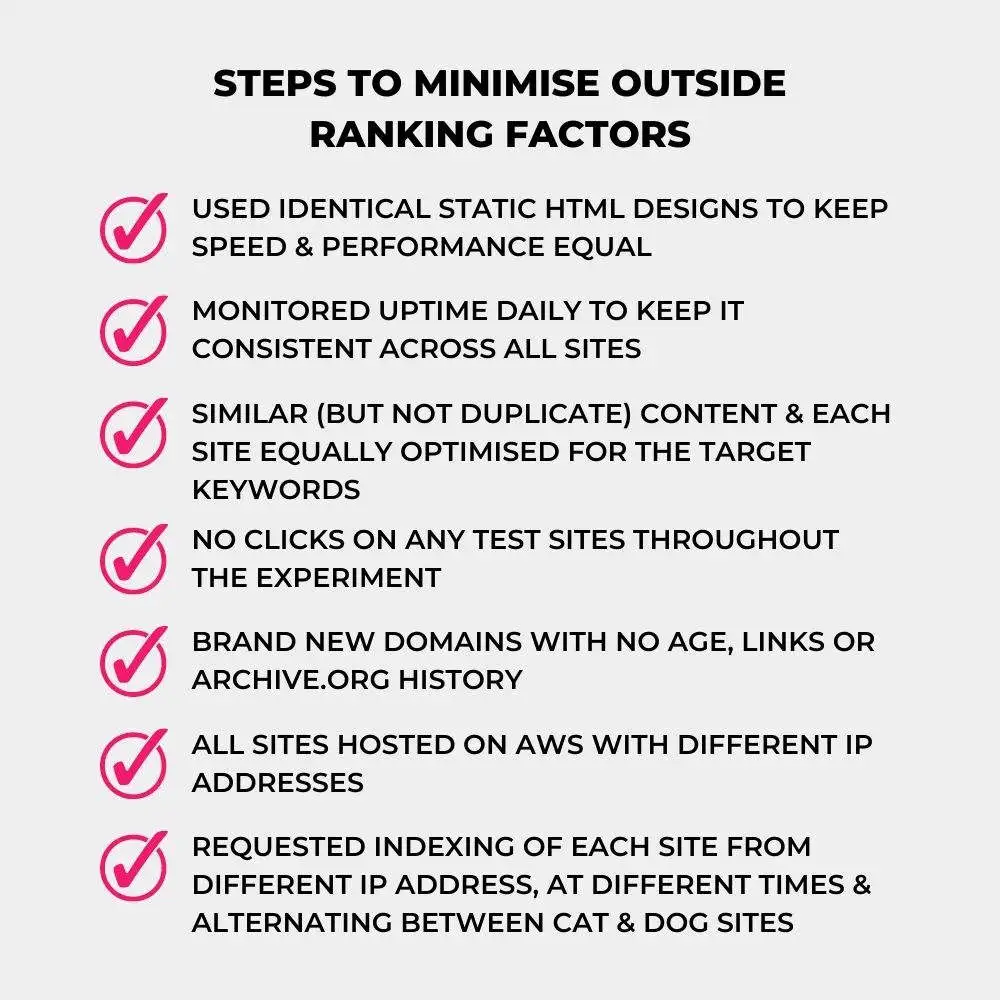

Minimising Outside Ranking Factors

As with our other experiments, isolating the factor we were looking to test (image relevancy) was vital.

When creating the experiment websites we took a number of steps to ensure factors other than the relevancy of the images on the websites would not influence the rankings.

Site Designs

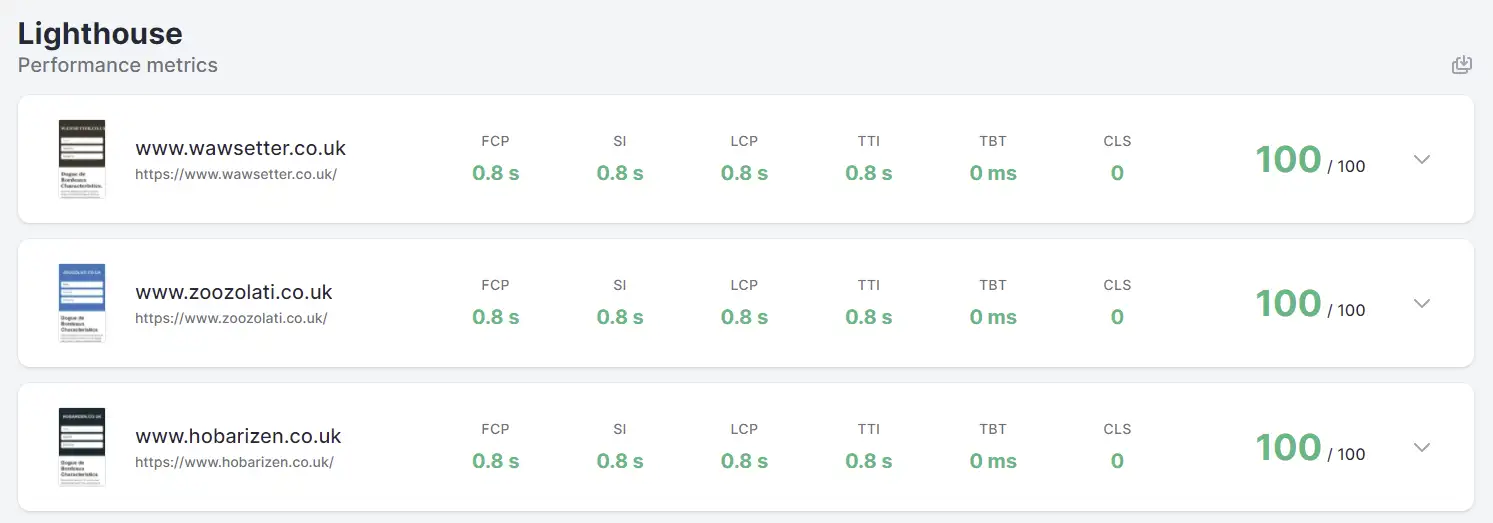

We used identical static HTML designs for every site to keep functionality, speed and performance consistent across each one.

We ran multiple PageSpeed Insights tests to ensure that the speed and performance of the sites were equal.

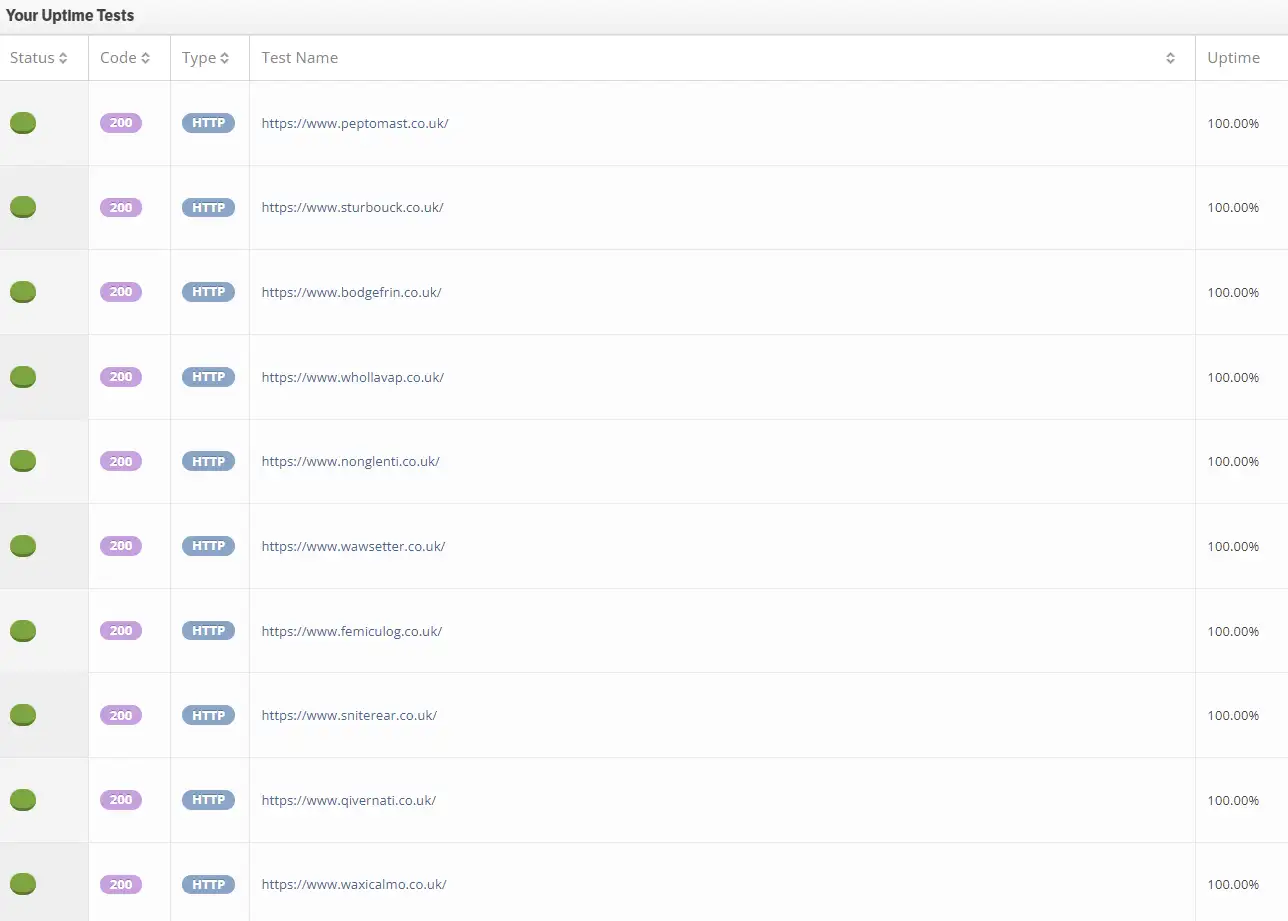

Uptime Monitoring

We monitored uptime throughout the entire experiment to ensure each site remained live consistently with no downtime.

We used StatusCake for the uptime monitoring, which ran checks on every test site multiple times a day.

On-Site Optimisation

Besides the images used, all other content on the experiment sites had to be equally optimised for the targeted keyword. We ensured the content was similar (although not identical/duplicate), contained the same number of mentions of the keyword and that these mentions were in the same position on each site.
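A check like this is easy to automate. The sketch below is one possible approach, assuming each site's copy has already been split into named sections (for example title, heading and body); the section names, site names and sample text are hypothetical.

```python
import re


def mention_profile(sections, keyword):
    """Count case-insensitive keyword mentions in each named section."""
    pattern = re.compile(re.escape(keyword), re.IGNORECASE)
    return {name: len(pattern.findall(text)) for name, text in sections.items()}


def mismatched_sites(sites, keyword):
    """Return the sites whose mention profile differs from the first site's."""
    profiles = {site: mention_profile(sections, keyword) for site, sections in sites.items()}
    if not profiles:
        return []
    reference = next(iter(profiles.values()))
    return [site for site, profile in profiles.items() if profile != reference]


# Hypothetical sample copy, used only to demonstrate the check.
sites = {
    "site-a.example": {
        "title": "Dogue de Bordeaux Characteristics",
        "body": "Dogue de Bordeaux characteristics include a broad head.",
    },
    "site-b.example": {
        "title": "Dogue de Bordeaux Characteristics Guide",
        "body": "A broad head is typical of the breed.",
    },
}

problems = mismatched_sites(sites, "Dogue de Bordeaux characteristics")
```

Here `site-b.example` is flagged because its body never mentions the keyword, whereas the first site mentions it once in each section.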

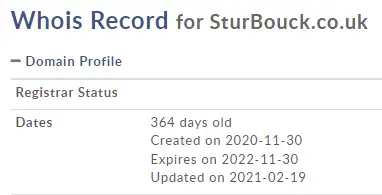

New Domains

To make sure no external signals could influence the results, we ran the experiment on 20 brand new domains.

We checked to make sure they had no links pointing to them prior to the experiment, and that there was no archive.org history on any of them.
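For the archive.org part of this check, one option is the Wayback Machine's public availability endpoint, which returns the closest known snapshot for a URL. The sketch below assumes that endpoint and its JSON shape (an `archived_snapshots` object that is empty when nothing is archived). It is not necessarily how we ran the check, and it does not cover the backlink side.

```python
import json
from urllib.parse import urlencode
from urllib.request import urlopen

WAYBACK_ENDPOINT = "https://archive.org/wayback/available"


def has_snapshot(payload):
    """True when an availability response lists at least one archived snapshot."""
    return bool(payload.get("archived_snapshots"))


def check_domain(domain, timeout=10):
    """Ask the Wayback Machine whether it holds any snapshot of the domain."""
    url = f"{WAYBACK_ENDPOINT}?{urlencode({'url': domain})}"
    with urlopen(url, timeout=timeout) as response:
        return has_snapshot(json.load(response))
```

A domain would pass this check when `check_domain("some-new-domain.example")` returns `False`. The domain shown is a placeholder, and a manual look at the Wayback Machine is still a sensible second check.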

Registered Domains From Different Tags

We even registered the various domain names from different tags to ensure there was no footprint between them.

Kept Domains Private

To mitigate the risks that any organic clicks and/or engagement data on the sites could influence the results, we kept the experiment domains private.

Only the tech SEO team and web developers knew the domains used throughout the experiment.

No Clicks on The Organic Results

In line with the above, nobody who knew the experiment domains clicked on any of the search results so as to not risk any direct or indirect signals influencing the results.

Hosting

Every site was hosted on AWS but had a different IP address. This helped keep performance and speed consistent without connecting the domains to a single server.

Indexing

All of the test sites were set to noindex and blocked from crawling in the robots.txt until we were ready to start the experiment.
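The robots.txt side of this can be verified with Python's standard library before launch. The snippet below is a minimal sketch: the blanket `Disallow: /` rule is an assumption about what such a file would contain, and the URL is a placeholder.

```python
from urllib.robotparser import RobotFileParser

# Assumed pre-launch robots.txt: block every crawler from every path.
BLOCK_ALL = """User-agent: *
Disallow: /
"""


def is_crawl_blocked(robots_txt, url, user_agent="Googlebot"):
    """True when the given robots.txt text forbids user_agent from fetching url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return not parser.can_fetch(user_agent, url)


blocked_before_launch = is_crawl_blocked(BLOCK_ALL, "https://test-site.example/")
blocked_after_launch = is_crawl_blocked("", "https://test-site.example/")
```

Worth bearing in mind that a crawler blocked by robots.txt cannot fetch the page, so it never sees a noindex directive on it. This sketch only checks the crawl block.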

Once we were ready to begin, we requested indexing for the sites gradually, switching to a new IP address each time. We alternated between requesting indexing of a cat site and a dog site, and a cat site was the first to be submitted.

Ranking

Ranking proved difficult even for the low competition keyword selected. This was most likely because our websites had so little high-quality unique content on them and no historical signals helping them.

We did a couple of things to encourage Google to rank the domains within at least the top 250 results including adding about and contact us pages to every site (the content was once again kept similar across all the websites), and creating links from a couple of the same directories for each website (again the content from the referring pages was kept almost identical and these were published at the same time).

Rank Tracking

We used Rank Tracker to keep track of the rankings for the experiment sites.

Usually we use SEMrush; however, in this case the test sites didn’t rank within the first 100 results, so they wouldn’t be picked up by a position tracking campaign.

Rank Tracker allowed us to widen our checks to the first 1000 results and measure the performance of all 20 websites from within a single position tracking campaign.

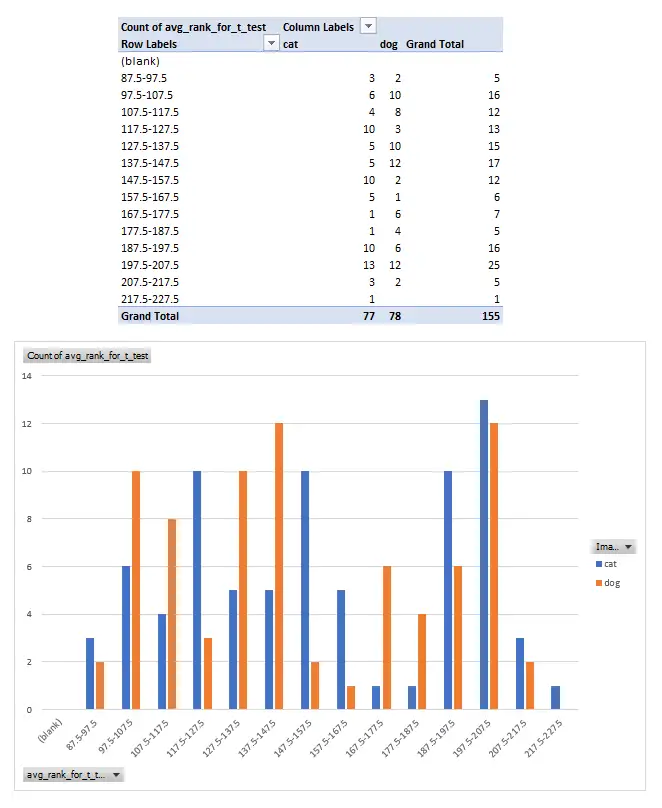

Results

After running the experiment for several months and collecting rankings regularly over this period, the data showed that the relevancy of the images on the test sites did not appear to influence rankings in any way.

Our Data Lead Ali analysed the data and looked for any statistical significance in how the sites ranked over the course of the experiment. His analysis showed that there was no correlation between more relevant images and higher rankings in the search results.
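This write-up doesn't specify which statistical test was used. As one illustrative way to compare two groups of ten sites, the sketch below runs a permutation test on the difference in mean ranking position. The function name, its parameters and the choice of test are our assumptions for the example, not a description of the analysis above.

```python
import random


def permutation_p_value(group_a, group_b, n_resamples=10_000, seed=0):
    """Two-sided permutation test on the difference in mean ranking position."""
    rng = random.Random(seed)

    def mean(values):
        return sum(values) / len(values)

    observed = abs(mean(group_a) - mean(group_b))
    pooled = list(group_a) + list(group_b)
    n_a = len(group_a)
    extreme = 0
    for _ in range(n_resamples):
        # Randomly reassign sites to the two groups and recompute the gap.
        rng.shuffle(pooled)
        if abs(mean(pooled[:n_a]) - mean(pooled[n_a:])) >= observed:
            extreme += 1
    # Add-one correction so the estimated p-value is never exactly zero.
    return (extreme + 1) / (n_resamples + 1)
```

Calling `permutation_p_value(dog_positions, cat_positions)`, where each list holds one average position per site, gives an estimate of how often a gap at least as large as the observed one would appear if the group labels were arbitrary. A large value is consistent with image relevancy making no detectable difference.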

The results of this experiment are in line with what John Mueller of Google has more recently said about the importance of image relevancy in the search results.

On October 22, 2021, in a Google SEO office-hours hangout, Mueller said that for web search they don’t look at the content of images on the landing page.

Whilst our findings showed no link between more relevant images and higher SEO rankings, we are in no way suggesting that you shouldn’t strive to ensure that your images are as relevant as possible to the landing pages they are being published on.

It is important to focus on creating the best website possible for your users and, ranking boost or no ranking boost, relevant unique images can play a part in that. So, when time and resources allow, this could still be worth looking at to take your content to the next level. For now, though, it seems it needn’t be top of your priority list if you have other content issues which could be hurting your search performance.

Site Command

Although the rankings for the actual test keyword suggest that image content and relevance do not influence the order of the search results, running a site command containing all of the experiment domain names with the keyword at the end reveals only dog images being pulled into the top SERP feature.

We wondered if this was due to the dog sites being listed first in the search, so we ran the same one but with the order reversed:

All but 1 of the images pulled into the SERP come from the dog sites. Perhaps this is because image content seems much more clearly to be used in the ranking of the image results, and is being considered in the image SERP features too.

Currently, manual checks of these site commands show 7 out of 10 of the sites positioned on the first page are the Dogue de Bordeaux sites, and previous checks had this as high as 9 out of 10.

As we didn't track either of these searches consistently throughout the experiment, we can't be sure if this has always been the case. However, it does raise some questions as to why the dog sites seem to be positioned so strongly.
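To put the 7 out of 10 and 9 out of 10 figures in context, it helps to ask how often a split like that would turn up by chance. The sketch below treats the first page as a random draw of 10 sites from the 10 dog and 10 cat sites (a hypergeometric model). That is a simplifying assumption for illustration, not a claim about how Google orders these results.

```python
from math import comb


def prob_at_least(k_min, group_size=10, other_size=10, page_size=10):
    """Chance that at least k_min of page_size slots go to one group at random."""
    total = comb(group_size + other_size, page_size)
    favourable = sum(
        comb(group_size, k) * comb(other_size, page_size - k)
        for k in range(k_min, min(group_size, page_size) + 1)
    )
    return favourable / total


seven_or_more = prob_at_least(7)
nine_or_more = prob_at_least(9)
```

Under this model, 7 or more dog sites on the first page would occur in roughly 9% of random orderings, so that split alone is not strong evidence. 9 or more would occur in roughly 0.05% of them, which would be much harder to put down to chance.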

Image Rankings

This experiment aimed to look at the effect of image relevance in the standard search results. As such, we were not actively tracking the rankings across image search.

However, a quick search for the target keyword from within the image tab on Google.co.uk does show 7 dog images ranking before the first cat one even shows up.

Conclusion

The technical set-up of this experiment proved to be one of the trickier ones we have run, largely due to how difficult it was to get the sites indexed and ranking at all consistently for the target keyword. However, after several months of testing, we are pleased to be able to present some tangible data in response to our initial hypothesis.

The fact that the relevancy of the images did not correlate with higher rankings in the organic search results does still pose some questions as to why not and we have a few ideas.

One potential answer is that the resources it would require to process this kind of information at scale would not be worth the potential benefits. This would be especially true if the engineers at Google and/or a machine learning algorithm had worked out that you could identify how closely relevant a landing page was for a target query, to a suitable degree of accuracy, by looking purely at other signals which require less processing resources to identify and analyse.

Another possible answer is that any algorithm that relied on such checks in any meaningful way risks opening itself up to manipulation through the artificial placement of large quantities of relevant images on otherwise irrelevant and/or low-quality pages.

Or, as is often the case in SEO, there will likely be a multitude of reasons as to why the content of the images found on a landing page is not currently being used in the ranking of the search results.

None of this is to say that image relevancy will never be used for standard search or that the relevancy of your images is not important at all. In fact, the recent comments on the product reviews update could suggest that the content of images found on a webpage might be something the search algorithms look at in the future.

As discussed above, the ultimate goal of any SEO and/or webmaster should be to create the best content possible for their users, and an important part of that will likely be ensuring that the images used in content enhance the experience of using the site.

We continue to test and refine how relevance is measured in search. You can see how we assess backlink alignment using our Relevancy Rating™ tool.